- The challenge of life-like appearance is significant for the future of sexbots

- Tech wizard Ishiguro’s robots are so real, they can be mistaken for humans

- Recent developments in AI promise a radical transformation of human-machine interaction

- Natural language processing and deep learning promise smart, beautiful sexbots in the next two decades

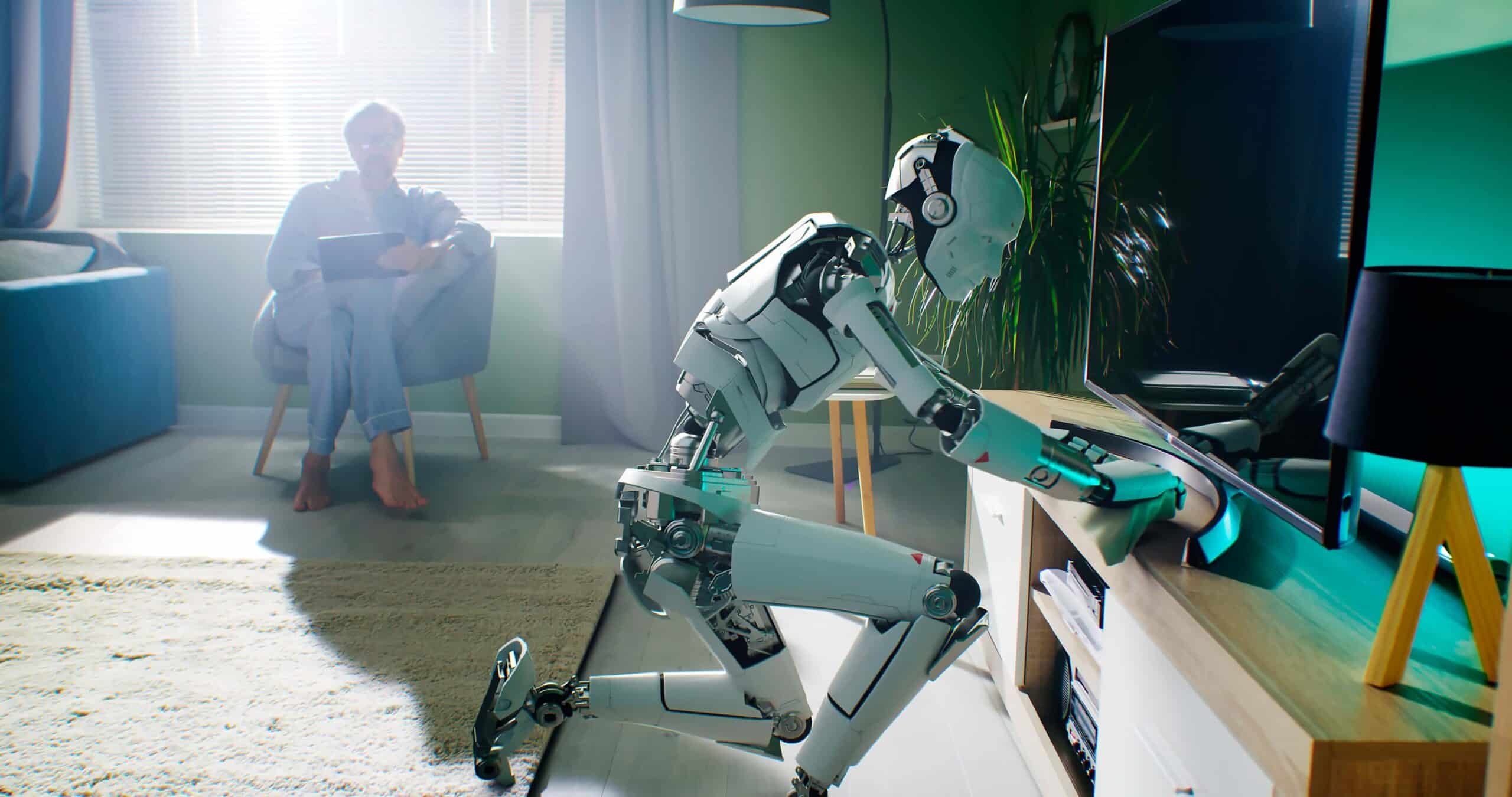

State-of-the-art humaniform robots are more advanced than you might imagine, but they are still held back by a number of problems. For instance, bipedal systems need to be highly sophisticated to simulate human movement, and the powerful servos that drive that movement must be carefully tuned to the robot’s sensors to avoid injuring the people it interacts with.

Research on bipedal robots continues, driven primarily by the need for disaster and emergency response robots that can manoeuvre in environments with stairs, uneven footing and debris. While these machines don’t look human, they’re improving every day. Commercially available robots already illustrate how smart today’s sensing and force-limiting systems are, allowing humans to work alongside machines that could, if not properly programmed, cause serious injury or death. These smart robotic co-workers sense when a person is near and slow down, or stop altogether, to avoid an accident.
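To make the ‘slow down or stop’ behaviour concrete, here is a minimal Python sketch of the idea, commonly called speed and separation monitoring. The function name and the distance thresholds (0.5 m to stop, 2 m to begin slowing) are hypothetical values chosen for illustration; real collaborative robots use limits derived from safety standards and each installation’s own risk assessment.

```python
def allowed_speed(distance_m: float,
                  max_speed: float = 1.0,        # hypothetical top speed, m/s
                  stop_distance_m: float = 0.5,  # hypothetical stop threshold
                  slow_distance_m: float = 2.0   # hypothetical slow-down threshold
                  ) -> float:
    """Return the permitted speed given the distance to the nearest person."""
    if stop_distance_m >= slow_distance_m:
        raise ValueError("stop distance must be smaller than slow-down distance")
    if distance_m <= stop_distance_m:
        return 0.0  # person too close: stop altogether
    if distance_m >= slow_distance_m:
        return max_speed  # nobody nearby: full speed
    # In between, scale the speed linearly with the remaining separation.
    fraction = (distance_m - stop_distance_m) / (slow_distance_m - stop_distance_m)
    return max_speed * fraction


for d in (3.0, 1.25, 0.3):
    print(d, allowed_speed(d))  # 1.0, then 0.5, then 0.0
```

Between the two thresholds the speed falls off smoothly, so a person stepping closer produces a gradual slowdown rather than an abrupt halt.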

The challenge of life-like appearance is significant for the future of sexbots

The most challenging areas for the development of androids have been the issues of hyper-realistic appearance, speech and thought. For androids in general, and sexbots in particular, to garner capital and clients, they’ll need to be a lot more than just safe and flexible. They’ll need to be attractive and smart, and these are bigger challenges than you might imagine.

The problem of aesthetics is crucial for the future of sexbots. For reasons often attributed to evolutionary biology, we seem to have a hard-wired revulsion towards things that are human-ish but not quite human enough. This is called the ‘uncanny valley’: the trough of revulsion between cartoon-esque cuteness and androids that are indistinguishable from real people. Anything between these two extremes (R2-D2 and C-3PO on one hand, and a healthy person on the other) strikes us as deeply, viscerally creepy. This innate reaction forces android designers to get it right or go home.

Tech wizard Ishiguro’s robots are so real, they can be mistaken for humans

The crude sexbots that are commercially available now are still stuck in this trough. But the most advanced androids are so similar to people in appearance that they can be hard to distinguish from the real thing, though they’re designed not for sex but for telecommunication. The most sophisticated of these are the brainchild of Hiroshi Ishiguro, the tech wizard of Osaka University and the Advanced Telecommunications Research Institute International (ATR). His work shows that we’re finally on the cusp of robots so real they can be mistaken for humans, and his projects demonstrate the power of touch and human presence in communication, paving the way for more life-like androids.

Ishiguro’s research explores the possibilities for his ‘Geminoids’ as communication tools: stand-ins that replicate the presence of a real human being. Instead of projecting an image of Ishiguro at a tech conference via Skype, for instance, his dream is to ‘re-present’ himself, bringing his ‘presence’ to the meeting in the fullest sense and with the full impact of his personality. The key to this is a startlingly life-like appearance, along with the natural movement that accompanies speech. Ishiguro has come a long way towards reproducing the almost imperceptible changes in the eyes, mouth and face that humans use to express emotion.

His Geminoids aren’t stand-alone systems. Instead, they’re communication devices that mimic the appearance and movement of a remote user. Nevertheless, his advances in android technology are mind-blowing.


Telenoid

His simplest telecommunication robot is the Telenoid. As the ‘noid’ in its name suggests, the Telenoid appears human, though it is designed to blend the features of old and young, male and female, in a generic, albeit Asian, form. The idea is that this homogenised appearance will allow it to stand in for any tele-connected user. Its body, really an abbreviated torso small enough to be placed in a user’s lap, is made of soft polyvinyl chloride and designed to be hugged and held. The Telenoid is a bit ghost-like, and perhaps a little creepy to some, but its facial expressions and huggable body encourage communication on a different level than even a videoconference might.

A range of embedded sensors allows a user to ‘inhabit’ the Telenoid’s body. Cameras in its eyes and mouth let it ‘see’ the person holding it, and microphones let it ‘hear.’ Connected via a wireless modem, the Telenoid enables emotional communication at a distance: delicate servo-motors reproduce the facial expressions and movements of the remote person its holder is speaking to. The Telenoid may never catch on, but it does point the way forward. Just as video brought a new depth to voice communication, Ishiguro’s work on projecting human presence at a distance illustrates the power of touch and embodiment.

Geminoid HI-1 to HI-4

Where the Telenoid is intentionally generic, the Geminoids mimic individual people. HI-1 through HI-4 are a series of tele-operated androids that very closely resemble Ishiguro. Driven by a complex set of pneumatic actuators, they follow their creator’s facial expressions and motions in real time, allowing an audience to interact with a stand-in for the man himself.
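Real-time following of this kind can be sketched as a simple control loop: a face tracker (assumed here, not shown) turns the operator’s expression into values between 0 and 1, and each value is mapped onto one actuator’s range of travel. This is a hypothetical illustration, not Ishiguro’s actual control software; the actuator names, ranges and smoothing factor are invented, and the smoothing step is just one common way to damp the jerky motion that can make an android look uneven.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Actuator:
    name: str
    min_pos: float        # hypothetical lower limit of travel
    max_pos: float        # hypothetical upper limit of travel
    position: float = 0.0


def clamp(value: float, low: float, high: float) -> float:
    return max(low, min(high, value))


def follow(actuators: List[Actuator], expression: Dict[str, float],
           smoothing: float = 0.5) -> None:
    """Move each actuator part of the way towards the operator's current expression."""
    for act in actuators:
        # Tracker output is assumed normalised to 0..1; out-of-range values are clamped,
        # and a missing value is treated as 0 (the actuator relaxes to its minimum).
        target01 = clamp(expression.get(act.name, 0.0), 0.0, 1.0)
        target = act.min_pos + target01 * (act.max_pos - act.min_pos)
        # Exponential smoothing: close only a fraction of the gap on each control tick.
        act.position += smoothing * (target - act.position)


face = [Actuator("mouth_open", 0.0, 10.0), Actuator("brow_raise", 0.0, 4.0)]
follow(face, {"mouth_open": 1.0, "brow_raise": 0.5})
print([a.position for a in face])  # [5.0, 1.0]
follow(face, {"mouth_open": 1.0, "brow_raise": 0.5})
print([a.position for a in face])  # [7.5, 1.5]
```

A larger smoothing factor tracks the operator more tightly but passes more tracker jitter through to the face; a smaller one looks calmer but lags behind.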

No one is likely to mistake HI-2 for its creator while it is moving, but the robot is remarkably adept at mimicry, coming very close indeed to conveying a sense of Ishiguro’s personality. Available photos show that HI-4’s face could easily be mistaken for its creator’s, though its motions are still a touch uneven and forced, somewhat limiting the illusion of human presence.

Geminoid F

Geminoid F is perhaps Ishiguro’s crowning achievement. Her movements are so fluid and her face so human that she is difficult to distinguish from her human counterpart. In fact, a recent study found that half of the people tested could not distinguish the android from the real person within five seconds. This is significant because it suggests that Ishiguro has climbed the other side of the uncanny valley, conquering the curse of the nearly human. Given advances in 3D modelling, we can expect made-to-order, life-like replicas of human beings very soon. But we don’t just want sexbots to look real; we want our interactions with them to mimic human relationships more fully. And as Ishiguro’s research suggests, we shouldn’t underestimate the need for substantive interaction as part of this communication: not just movement and mimicry, but meaningful speech and at least a simulation of emotion.

Recent developments in AI promise a radical transformation of human-machine interaction

Advances in artificial intelligence are sometimes painfully slow, as anyone who has tried to hold a substantive conversation with Apple’s Siri can attest. And even if you chat with an award-winning chatbot for a few minutes, you can still fairly easily ask it questions that reveal it’s a machine. But recent developments in IBM’s supercomputer, Watson, promise a radical transformation of human-machine interaction. The gold standard of human-robot interaction is natural language processing, which allows a machine to understand ordinary words and sentence structure. On this front, IBM’s thinking machine is a major leap forward: Watson’s natural language interface lets it respond to ordinary language with human-like conversation. No special prompts or commands are necessary for Watson to understand you, allowing it to interact with people in revolutionary ways.

Because Watson can understand puns, verbal cues and contextual meaning, it can potentially hold a real conversation. And when its developers mate this language capacity with deep learning, companions that mimic human beings become possible. As Vint Cerf insists, “…natural language processing will lead to conversational interactions with computer-based systems.” Make no mistake, Watson ‘understands’ your conversation. Its deep learning algorithms are loosely modelled on human cognition, using a hierarchical, nonlinear approach to problem solving.

Imagine multiple layers of analysis. Lower levels look for patterns in data and pass their discoveries up to the next level as input. That input, in turn, is explored for patterns in the same way. This layered processing is loosely inspired by the way the human brain works, and it lets thinking machines do some very smart things. For instance, a deep learning algorithm for facial recognition might, at its lowest level, recognise features that resemble an eye. Finding that pattern, the lowest level passes this information up to the next level, which looks for a second eye, an ear, a nose or a mouth. Finding one, it passes the problem one level higher, where the algorithm starts building a map of these features, and so on, until the machine recognises that it is seeing a face and identifies whose face it is, all within a fraction of a second.
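The layered idea can be shown in a few lines of plain Python. This is a minimal sketch of a tiny two-layer network, not a face recogniser: each layer takes the previous layer’s output, computes weighted sums, applies a simple nonlinearity (ReLU) and passes the result upwards. The weights are hand-picked, hypothetical values; a real deep learning system learns its weights, often millions of them, from training data.

```python
from typing import List, Sequence, Tuple

Vector = List[float]
Layer = Tuple[List[List[float]], List[float]]  # (weights, biases)


def relu(values: Sequence[float]) -> Vector:
    # Nonlinearity: negative responses are zeroed, positive ones pass through.
    return [max(0.0, v) for v in values]


def dense(layer: Layer, inputs: Sequence[float]) -> Vector:
    # Each unit computes a weighted sum of all inputs plus its bias.
    weights, biases = layer
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def forward(layers: Sequence[Layer], inputs: Sequence[float]) -> Vector:
    # Pass the data up through the layers: each output becomes the next layer's input.
    activation = list(inputs)
    for layer in layers:
        activation = relu(dense(layer, activation))
    return activation


# Hypothetical hand-set weights. Layer 1 has two low-level "pattern detectors"
# (on-off-on-off and off-on-off-on); layer 2 combines them and fires only
# when the first pattern is present.
LAYERS: List[Layer] = [
    ([[1.0, -1.0, 1.0, -1.0],
      [-1.0, 1.0, -1.0, 1.0]], [0.0, 0.0]),
    ([[1.0, -1.0]], [0.0]),
]

print(forward(LAYERS, [1.0, 0.0, 1.0, 0.0]))  # [2.0]
print(forward(LAYERS, [0.0, 1.0, 0.0, 1.0]))  # [0.0]
```

Stacking more layers of the same kind is what makes a network ‘deep’; the face example above simply has many more layers and far larger inputs.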

A machine powered by such deep learning could recognise you when you arrive home from work and, by comparing your facial expression and body language against its history of examples, assess your mood. That’s right: very soon a machine will be able to tell if you’ve had a bad day at work!
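Comparing a new observation ‘against its history of examples’ can be illustrated with one of the simplest techniques there is, k-nearest-neighbour classification. This is a swapped-in stand-in, not a deep learning model: it assumes some upstream system has already reduced your face and posture to three hypothetical scores (smile, brow furrow, shoulder slump) between 0 and 1, and the labelled history below is invented for illustration.

```python
import math
from collections import Counter

# Hypothetical labelled history: (smile, brow_furrow, shoulder_slump) scores in [0, 1].
HISTORY = [
    ((0.9, 0.1, 0.1), "good day"),
    ((0.8, 0.2, 0.2), "good day"),
    ((0.7, 0.1, 0.3), "good day"),
    ((0.1, 0.8, 0.9), "bad day"),
    ((0.2, 0.7, 0.8), "bad day"),
    ((0.3, 0.9, 0.7), "bad day"),
]


def guess_mood(observation, history=HISTORY, k=3):
    """Label an observation by majority vote among its k closest past examples."""
    # Sort past examples by Euclidean distance to the new observation.
    nearest = sorted(history, key=lambda item: math.dist(observation, item[0]))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


print(guess_mood((0.2, 0.8, 0.8)))     # bad day
print(guess_mood((0.85, 0.15, 0.15)))  # good day
```

An odd `k` avoids tied votes when there are only two labels; a real system would also need far more examples and much richer features than three scores.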

Natural language processing and deep learning promise smart, beautiful sexbots in the next two decades

The next step would be to place this sort of machine personality in a humanoid body. In the future, you’ll be able to have a hyper-real, hyper-attractive robotic companion to meet your emotional and sexual needs. Can you imagine a future in which the mind of Samantha, the AI from the film Her, is mated with a machine body with all the right parts? This sounds unbelievably futuristic, but Watson has already shrunk by about 90 percent since it first went online, going from the size of a bedroom to the size of three stacked pizza boxes in just a few years. As microprocessors approach the quantum scale, a very smart artificial intelligence will be small enough to be housed in a human-sized body very, very soon.

In the near future, you’ll be able to have conversations with androids that are every bit as nuanced and complex as those you now have with your flesh-and-blood friends. Androids will soon leap from the pages of science fiction into our lives and homes, and when they do, it will be thanks to the advances of people like Ishiguro. Progress in appearance, natural language processing and deep learning promises smart, beautiful sexbots within the next two decades.